Audio I/O¶

torchaudio integrates libsox and provides a rich set of audio input/output functions.

# When running this tutorial in Google Colab, install the required packages

# with the following.

# !pip install torchaudio boto3

import torch

import torchaudio

print(torch.__version__)

print(torchaudio.__version__)

Out:

1.10.0+cpu

0.10.0+cpu

Preparing data and utility functions (skip this section)¶

#@title Prepare data and utility functions. {display-mode: "form"}

#@markdown

#@markdown You do not need to look into this cell.

#@markdown Just execute once and you are good to go.

#@markdown

#@markdown In this tutorial, we will use speech data from the [VOiCES dataset](https://iqtlabs.github.io/voices/), which is licensed under Creative Commons BY 4.0.

import io

import os

import requests

import tarfile

import boto3

from botocore import UNSIGNED

from botocore.config import Config

import matplotlib.pyplot as plt

from IPython.display import Audio, display

_SAMPLE_DIR = "_assets"

SAMPLE_WAV_URL = "https://pytorch-tutorial-assets.s3.amazonaws.com/steam-train-whistle-daniel_simon.wav"

SAMPLE_WAV_PATH = os.path.join(_SAMPLE_DIR, "steam.wav")

SAMPLE_MP3_URL = "https://pytorch-tutorial-assets.s3.amazonaws.com/steam-train-whistle-daniel_simon.mp3"

SAMPLE_MP3_PATH = os.path.join(_SAMPLE_DIR, "steam.mp3")

SAMPLE_GSM_URL = "https://pytorch-tutorial-assets.s3.amazonaws.com/steam-train-whistle-daniel_simon.gsm"

SAMPLE_GSM_PATH = os.path.join(_SAMPLE_DIR, "steam.gsm")

SAMPLE_WAV_SPEECH_URL = "https://pytorch-tutorial-assets.s3.amazonaws.com/VOiCES_devkit/source-16k/train/sp0307/Lab41-SRI-VOiCES-src-sp0307-ch127535-sg0042.wav"

SAMPLE_WAV_SPEECH_PATH = os.path.join(_SAMPLE_DIR, "speech.wav")

SAMPLE_TAR_URL = "https://pytorch-tutorial-assets.s3.amazonaws.com/VOiCES_devkit.tar.gz"

SAMPLE_TAR_PATH = os.path.join(_SAMPLE_DIR, "sample.tar.gz")

SAMPLE_TAR_ITEM = "VOiCES_devkit/source-16k/train/sp0307/Lab41-SRI-VOiCES-src-sp0307-ch127535-sg0042.wav"

S3_BUCKET = "pytorch-tutorial-assets"

S3_KEY = "VOiCES_devkit/source-16k/train/sp0307/Lab41-SRI-VOiCES-src-sp0307-ch127535-sg0042.wav"

def _fetch_data():
    # Download every sample asset into the local sample directory.
    os.makedirs(_SAMPLE_DIR, exist_ok=True)
    uri = [
        (SAMPLE_WAV_URL, SAMPLE_WAV_PATH),
        (SAMPLE_MP3_URL, SAMPLE_MP3_PATH),
        (SAMPLE_GSM_URL, SAMPLE_GSM_PATH),
        (SAMPLE_WAV_SPEECH_URL, SAMPLE_WAV_SPEECH_PATH),
        (SAMPLE_TAR_URL, SAMPLE_TAR_PATH),
    ]
    for url, path in uri:
        with open(path, 'wb') as file_:
            file_.write(requests.get(url).content)

_fetch_data()

def print_stats(waveform, sample_rate=None, src=None):
    # Print shape, dtype and basic statistics of a waveform tensor.
    if src:
        print("-" * 10)
        print("Source:", src)
        print("-" * 10)
    if sample_rate:
        print("Sample Rate:", sample_rate)
    print("Shape:", tuple(waveform.shape))
    print("Dtype:", waveform.dtype)
    print(f" - Max: {waveform.max().item():6.3f}")
    print(f" - Min: {waveform.min().item():6.3f}")
    print(f" - Mean: {waveform.mean().item():6.3f}")
    print(f" - Std Dev: {waveform.std().item():6.3f}")
    print()
    print(waveform)
    print()

def plot_waveform(waveform, sample_rate, title="Waveform", xlim=None, ylim=None):
    # Plot each channel's amplitude against time, one subplot per channel.
    waveform = waveform.numpy()
    num_channels, num_frames = waveform.shape
    time_axis = torch.arange(0, num_frames) / sample_rate
    figure, axes = plt.subplots(num_channels, 1)
    if num_channels == 1:
        axes = [axes]
    for c in range(num_channels):
        axes[c].plot(time_axis, waveform[c], linewidth=1)
        axes[c].grid(True)
        if num_channels > 1:
            axes[c].set_ylabel(f'Channel {c+1}')
        if xlim:
            axes[c].set_xlim(xlim)
        if ylim:
            axes[c].set_ylim(ylim)
    figure.suptitle(title)
    plt.show(block=False)

def plot_specgram(waveform, sample_rate, title="Spectrogram", xlim=None):
    # Plot a spectrogram for each channel, one subplot per channel.
    waveform = waveform.numpy()
    num_channels, num_frames = waveform.shape
    time_axis = torch.arange(0, num_frames) / sample_rate
    figure, axes = plt.subplots(num_channels, 1)
    if num_channels == 1:
        axes = [axes]
    for c in range(num_channels):
        axes[c].specgram(waveform[c], Fs=sample_rate)
        if num_channels > 1:
            axes[c].set_ylabel(f'Channel {c+1}')
        if xlim:
            axes[c].set_xlim(xlim)
    figure.suptitle(title)
    plt.show(block=False)

def play_audio(waveform, sample_rate):
    # Render an inline audio player for a mono or stereo waveform.
    waveform = waveform.numpy()
    num_channels, num_frames = waveform.shape
    if num_channels == 1:
        display(Audio(waveform[0], rate=sample_rate))
    elif num_channels == 2:
        display(Audio((waveform[0], waveform[1]), rate=sample_rate))
    else:
        raise ValueError("Waveforms with more than 2 channels are not supported.")

def _get_sample(path, resample=None):
    # Mix down to one channel and optionally resample with sox effects.
    effects = [
        ["remix", "1"]
    ]
    if resample:
        effects.extend([
            ["lowpass", f"{resample // 2}"],
            ["rate", f'{resample}'],
        ])
    return torchaudio.sox_effects.apply_effects_file(path, effects=effects)

def get_sample(*, resample=None):
    return _get_sample(SAMPLE_WAV_PATH, resample=resample)

def inspect_file(path):
    # Print the file size and the metadata reported by torchaudio.info.
    print("-" * 10)
    print("Source:", path)
    print("-" * 10)
    print(f" - File size: {os.path.getsize(path)} bytes")
    print(f" - {torchaudio.info(path)}")

Querying audio metadata¶

The function torchaudio.info fetches audio metadata. You can provide a path-like object or a file-like object.

metadata = torchaudio.info(SAMPLE_WAV_PATH)

print(metadata)

Out:

AudioMetaData(sample_rate=44100, num_frames=109368, num_channels=2, bits_per_sample=16, encoding=PCM_S)

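where

- sample_rate is the sampling rate of the audio
- num_channels is the number of channels
- num_frames is the number of frames per channel
- bits_per_sample is the bit depth
- encoding is the sample coding format

encoding can take one of the following values:

- "PCM_S": Signed integer linear PCM
- "PCM_U": Unsigned integer linear PCM
- "PCM_F": Floating point linear PCM
- "FLAC": FLAC, a lossless audio codec
- "ULAW": Mu-law [wikipedia]
- "ALAW": A-law [wikipedia]
- "MP3": MP3, MPEG-1 Audio Layer III
- "VORBIS": OGG Vorbis [xiph.org]
- "AMR_NB": Adaptive Multi-Rate [wikipedia]
- "AMR_WB": Adaptive Multi-Rate Wideband [wikipedia]
- "OPUS": Opus [opus-codec.org]
- "GSM": GSM-FR [wikipedia]
- "UNKNOWN": None of the above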

Note

- bits_per_sample can be 0 for formats with compression and/or variable bit rate (such as MP3).
- num_frames can be 0 for the GSM-FR format.

metadata = torchaudio.info(SAMPLE_MP3_PATH)

print(metadata)

metadata = torchaudio.info(SAMPLE_GSM_PATH)

print(metadata)

Out:

AudioMetaData(sample_rate=44100, num_frames=110559, num_channels=2, bits_per_sample=0, encoding=MP3)

AudioMetaData(sample_rate=8000, num_frames=0, num_channels=1, bits_per_sample=0, encoding=GSM)
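The metadata fields are enough to derive a clip's duration, provided num_frames is not reported as 0. The following is a minimal sketch of that calculation. `Meta` is a hypothetical stand-in for the object printed above (so the snippet runs without torchaudio), and `duration_seconds` is an illustrative helper, not part of the library.

```python
from typing import NamedTuple, Optional


class Meta(NamedTuple):
    """Hypothetical stand-in for the fields printed by torchaudio.info."""
    sample_rate: int
    num_frames: int
    num_channels: int
    bits_per_sample: int
    encoding: str


def duration_seconds(meta: Meta) -> Optional[float]:
    """Return the duration in seconds, or None when num_frames is reported as 0."""
    if meta.num_frames == 0 or meta.sample_rate == 0:
        return None
    return meta.num_frames / meta.sample_rate


# Values copied from the outputs shown in this section.
wav = Meta(44100, 109368, 2, 16, "PCM_S")
gsm = Meta(8000, 0, 1, 0, "GSM")

print(duration_seconds(wav))  # 2.48
print(duration_seconds(gsm))  # None
```

Returning None rather than 0.0 keeps "unknown length" distinguishable from "empty file".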

Querying file-like objects¶

info also works on file-like objects.

print("Source:", SAMPLE_WAV_URL)
with requests.get(SAMPLE_WAV_URL, stream=True) as response:
    metadata = torchaudio.info(response.raw)
print(metadata)

Out:

Source: https://pytorch-tutorial-assets.s3.amazonaws.com/steam-train-whistle-daniel_simon.wav

AudioMetaData(sample_rate=44100, num_frames=109368, num_channels=2, bits_per_sample=16, encoding=PCM_S)

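Note

When passing a file-like object, info does not read all of the underlying data; rather, it reads only a portion of the data from the beginning.

Therefore, for a given audio format, it may not be able to retrieve the correct metadata, including the format itself.

The following example illustrates this.

- Use the format argument to specify the audio format of the input.
- The returned metadata has num_frames = 0.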

print("Source:", SAMPLE_MP3_URL)
with requests.get(SAMPLE_MP3_URL, stream=True) as response:
    metadata = torchaudio.info(response.raw, format="mp3")
    print(f"Fetched {response.raw.tell()} bytes.")
print(metadata)

Out:

Source: https://pytorch-tutorial-assets.s3.amazonaws.com/steam-train-whistle-daniel_simon.mp3

Fetched 8192 bytes.

AudioMetaData(sample_rate=44100, num_frames=0, num_channels=2, bits_per_sample=0, encoding=MP3)
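The same "read only the beginning" behaviour can be observed without a network connection. The sketch below is an analogy, not torchaudio itself: it uses Python's standard-library wave module in place of torchaudio.info, and a hypothetical `CountingReader` wrapper to count how many bytes a header query consumes from a file-like object.

```python
import io
import wave


class CountingReader:
    """Hypothetical file-like wrapper that counts the bytes read through it."""

    def __init__(self, data: bytes):
        self._buf = io.BytesIO(data)
        self.bytes_read = 0

    def read(self, size=-1):
        chunk = self._buf.read(size)
        self.bytes_read += len(chunk)
        return chunk

    def tell(self):
        return self._buf.tell()

    def seek(self, pos, whence=0):
        return self._buf.seek(pos, whence)


# Build a one-second, 16 kHz, mono, 16-bit WAV of silence in memory.
src = io.BytesIO()
with wave.open(src, "wb") as writer:
    writer.setnchannels(1)
    writer.setsampwidth(2)
    writer.setframerate(16000)
    writer.writeframes(bytes(2 * 16000))
data = src.getvalue()

# Query only the header fields; no audio frames are requested.
reader = CountingReader(data)
with wave.open(reader, "rb") as wav:
    info = (wav.getframerate(), wav.getnchannels(), wav.getnframes())

print(info)
print(f"Read {reader.bytes_read} of {len(data)} bytes")
```

For WAV the header alone carries the frame count. For formats such as MP3 it does not, which is consistent with num_frames=0 in the output above.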

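Loading audio data into Tensor¶

To load audio data, you can use torchaudio.load.

This function accepts a path-like object or a file-like object as input.

The returned value is a tuple of waveform (Tensor) and sample rate (int).

By default, the resulting tensor object has dtype=torch.float32 and its values are normalized to the range [-1.0, 1.0].

For the list of supported formats, please refer to the torchaudio documentation.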

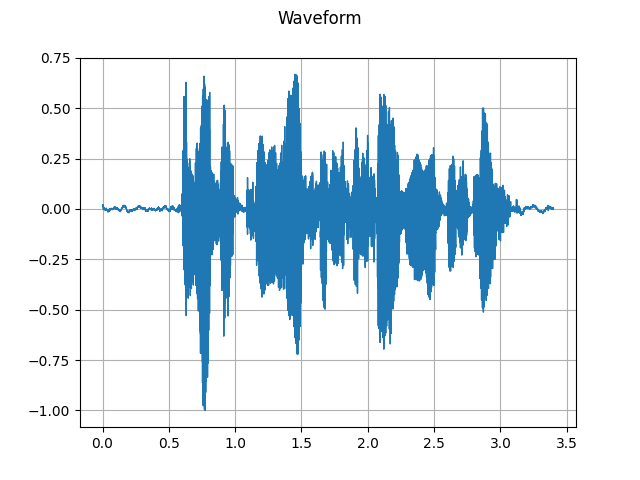

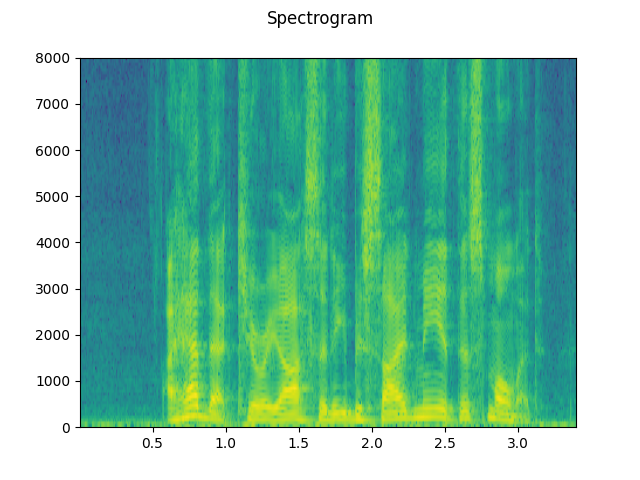

waveform, sample_rate = torchaudio.load(SAMPLE_WAV_SPEECH_PATH)

print_stats(waveform, sample_rate=sample_rate)

plot_waveform(waveform, sample_rate)

plot_specgram(waveform, sample_rate)

play_audio(waveform, sample_rate)

Out:

Sample Rate: 16000

Shape: (1, 54400)

Dtype: torch.float32

- Max: 0.668

- Min: -1.000

- Mean: 0.000

- Std Dev: 0.122

tensor([[0.0183, 0.0180, 0.0180, ..., 0.0018, 0.0019, 0.0032]])

<IPython.lib.display.Audio object>
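To make the [-1.0, 1.0] normalization concrete, here is a small standard-library sketch that decodes 16-bit signed PCM bytes and scales them by 1/32768. The scale factor is an assumption (a common convention for 16-bit audio), and `pcm16_to_float` is a hypothetical helper; the exact scaling torchaudio applies is not stated in this tutorial.

```python
import struct


def pcm16_to_float(raw: bytes) -> list:
    """Decode little-endian 16-bit signed PCM and scale each sample by 1/32768."""
    count = len(raw) // 2
    samples = struct.unpack(f"<{count}h", raw[: count * 2])
    return [s / 32768.0 for s in samples]


# Four samples: the most negative value, zero, half scale, the most positive value.
raw = struct.pack("<4h", -32768, 0, 16384, 32767)
print(pcm16_to_float(raw))
# [-1.0, 0.0, 0.5, 0.999969482421875]
```

Under this convention the most negative sample maps to exactly -1.0, while the most positive sample falls just short of 1.0.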

从类似文件的对象加载¶

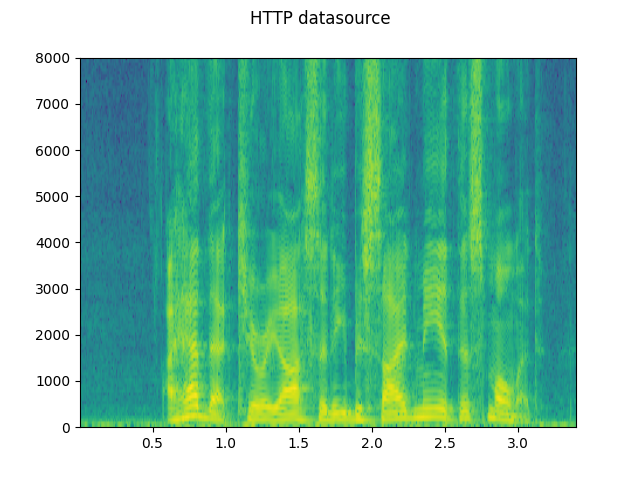

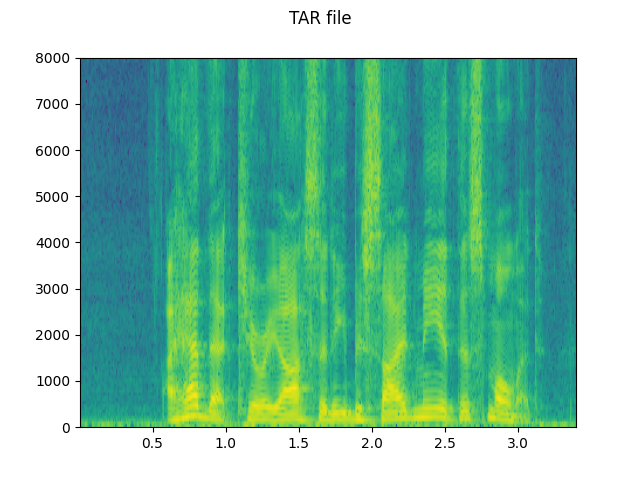

torchaudio的 I/O 函数现在支持类文件对象。这允许从本地文件系统内外的位置获取和解码音频数据。以下示例说明了这一点。

# Load audio data as HTTP request
with requests.get(SAMPLE_WAV_SPEECH_URL, stream=True) as response:
    waveform, sample_rate = torchaudio.load(response.raw)
plot_specgram(waveform, sample_rate, title="HTTP datasource")

# Load audio from tar file
with tarfile.open(SAMPLE_TAR_PATH, mode='r') as tarfile_:
    fileobj = tarfile_.extractfile(SAMPLE_TAR_ITEM)
    waveform, sample_rate = torchaudio.load(fileobj)
plot_specgram(waveform, sample_rate, title="TAR file")

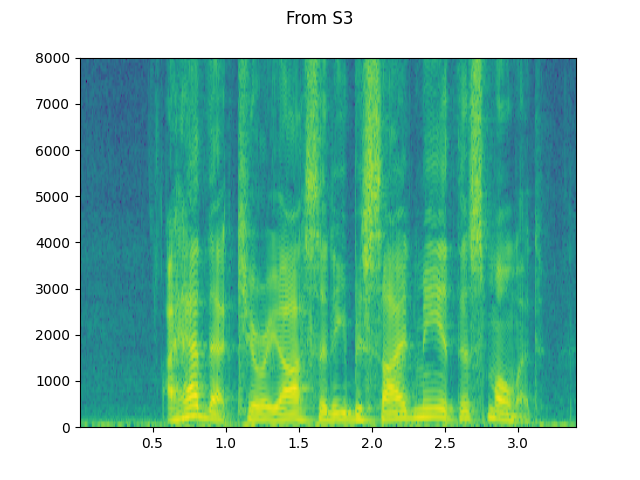

# Load audio from S3

client = boto3.client('s3', config=Config(signature_version=UNSIGNED))

response = client.get_object(Bucket=S3_BUCKET, Key=S3_KEY)

waveform, sample_rate = torchaudio.load(response['Body'])

plot_specgram(waveform, sample_rate, title="From S3")
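What the three sources above have in common is that each hands the decoder an object with a read method rather than a path. The sketch below reproduces the tar case offline with the standard library only. It substitutes the wave module for torchaudio.load, and the archive, member name and helper `make_wav_bytes` are all made up for illustration.

```python
import io
import tarfile
import wave


def make_wav_bytes(num_frames=800, sample_rate=8000):
    """Return a mono 16-bit WAV of silence as bytes (illustrative helper)."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as writer:
        writer.setnchannels(1)
        writer.setsampwidth(2)
        writer.setframerate(sample_rate)
        writer.writeframes(bytes(2 * num_frames))
    return buf.getvalue()


# Pack the WAV into an in-memory tar archive under a hypothetical member name.
wav_bytes = make_wav_bytes()
tar_buf = io.BytesIO()
with tarfile.open(fileobj=tar_buf, mode="w") as tar:
    member_info = tarfile.TarInfo(name="clips/silence.wav")
    member_info.size = len(wav_bytes)
    tar.addfile(member_info, io.BytesIO(wav_bytes))
tar_buf.seek(0)

# extractfile returns a file-like object; nothing is written to disk.
with tarfile.open(fileobj=tar_buf, mode="r") as tar:
    member = tar.extractfile("clips/silence.wav")
    with wave.open(member, "rb") as wav:
        params = (wav.getframerate(), wav.getnchannels(), wav.getnframes())

print(params)  # (8000, 1, 800)
```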

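Tips on slicing¶

Providing the num_frames and frame_offset arguments restricts decoding to the corresponding segment of the input.

The same result can be achieved with ordinary Tensor slicing (i.e. waveform[:, frame_offset:frame_offset+num_frames]). However, providing the num_frames and frame_offset arguments is more efficient.

This is because the function stops acquiring and decoding data once it has decoded the requested frames. This is advantageous when the audio data are transferred over a network, because the transfer stops as soon as the necessary amount of data has been fetched.

The following example illustrates this.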

# Illustration of two different decoding methods.

# The first one will fetch all the data and decode them, while

# the second one will stop fetching data once it completes decoding.

# The resulting waveforms are identical.

frame_offset, num_frames = 16000, 16000  # Fetch and decode the 1 - 2 seconds

print("Fetching all the data...")
with requests.get(SAMPLE_WAV_SPEECH_URL, stream=True) as response:
    waveform1, sample_rate1 = torchaudio.load(response.raw)
    waveform1 = waveform1[:, frame_offset:frame_offset+num_frames]
    print(f" - Fetched {response.raw.tell()} bytes")

print("Fetching until the requested frames are available...")
with requests.get(SAMPLE_WAV_SPEECH_URL, stream=True) as response:
    waveform2, sample_rate2 = torchaudio.load(
        response.raw, frame_offset=frame_offset, num_frames=num_frames)
    print(f" - Fetched {response.raw.tell()} bytes")

print("Checking the resulting waveform ... ", end="")
assert (waveform1 == waveform2).all()
print("matched!")

Out:

Fetching all the data...

- Fetched 108844 bytes

Fetching until the requested frames are available...

- Fetched 65580 bytes

Checking the resulting waveform ... matched!
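The byte counts above can be sanity-checked with a little arithmetic. The sketch below assumes the speech sample is mono 16-bit PCM with a 44-byte WAV header. These values are not stated in the tutorial, but they reproduce the 108844-byte full download exactly. `bytes_needed` is a hypothetical helper.

```python
def bytes_needed(frame_offset, num_frames, num_channels=1,
                 bits_per_sample=16, header_bytes=44):
    """Bytes from the start of a PCM WAV stream up to the last requested frame.

    The defaults (mono, 16-bit, 44-byte header) are assumptions about the sample.
    """
    bytes_per_frame = num_channels * bits_per_sample // 8
    return header_bytes + (frame_offset + num_frames) * bytes_per_frame


total_frames = 54400  # from the Shape printed when the speech sample was loaded
print(bytes_needed(0, total_frames))  # 108844
print(bytes_needed(16000, 16000))     # 64044
```

The 65580 bytes reported for the partial fetch sit slightly above this 64044-byte lower bound, presumably because the stream is read in blocks rather than byte by byte.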

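Saving audio to file¶

To save audio data in a format that common applications can interpret, you can use torchaudio.save.

This function accepts a path-like object or a file-like object.

When passing a file-like object, you also need to provide the format argument so that the function knows which format to use. With a path-like object, the function infers the format from the extension. If you are saving to a file without an extension, you need to provide the format argument.

When saving WAV-formatted data, the default encoding for a float32 Tensor is 32-bit floating-point PCM. You can provide the encoding and bits_per_sample arguments to change this behavior. For example, to save data in 16-bit signed integer PCM, you can do the following.

Note

Saving data in an encoding with a lower bit depth reduces the resulting file size but also reduces precision.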
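The precision cost of a lower bit depth can be bounded with a short calculation. The sketch below uses an assumed, simple quantization scheme (scale by 32768, round, clamp). The encoder behind torchaudio.save may round differently, and `to_int16` and `from_int16` are hypothetical helpers.

```python
def to_int16(x: float) -> int:
    """Quantize a float in [-1.0, 1.0] to a 16-bit signed integer (assumed scheme)."""
    return max(-32768, min(32767, round(x * 32768)))


def from_int16(q: int) -> float:
    """Map a 16-bit signed integer back to a float."""
    return q / 32768


# Sample values of the same magnitude as those printed in this tutorial.
samples = [0.508, -0.449, 0.0027, 0.0063, 0.0092]
errors = [abs(x - from_int16(to_int16(x))) for x in samples]

# Rounding to the nearest step keeps the error within half a step (1/65536).
print(max(errors) <= 0.5 / 32768)  # True
```

With this scheme, the round-trip error for in-range samples stays at or below about 1.5e-5, which is the precision traded for halving the sample size.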

waveform, sample_rate = get_sample()

print_stats(waveform, sample_rate=sample_rate)

# Save without any encoding option.

# The function will pick the encoding that

# fits the provided data.

path = f"{_SAMPLE_DIR}/save_example_default.wav"

torchaudio.save(path, waveform, sample_rate)

inspect_file(path)

# Save as 16-bit signed integer Linear PCM

# The resulting file occupies half the storage but loses precision

path = f"{_SAMPLE_DIR}/save_example_PCM_S16.wav"

torchaudio.save(

path, waveform, sample_rate,

encoding="PCM_S", bits_per_sample=16)

inspect_file(path)

Out:

Sample Rate: 44100

Shape: (1, 109368)

Dtype: torch.float32

- Max: 0.508

- Min: -0.449

- Mean: -0.000

- Std Dev: 0.122

tensor([[0.0027, 0.0063, 0.0092, ..., 0.0032, 0.0047, 0.0052]])

----------

Source: _assets/save_example_default.wav

----------

- File size: 437530 bytes

- AudioMetaData(sample_rate=44100, num_frames=109368, num_channels=1, bits_per_sample=32, encoding=PCM_F)

----------

Source: _assets/save_example_PCM_S16.wav

----------

- File size: 218780 bytes

- AudioMetaData(sample_rate=44100, num_frames=109368, num_channels=1, bits_per_sample=16, encoding=PCM_S)
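The two file sizes follow almost directly from the metadata. As a rough check, the sketch below computes the raw sample payload from num_frames, num_channels and bits_per_sample and treats whatever remains as container overhead. `pcm_payload_bytes` is a hypothetical helper, and the numbers are copied from the output above.

```python
def pcm_payload_bytes(num_frames, num_channels, bits_per_sample):
    """Size of the raw sample data, excluding any container header."""
    return num_frames * num_channels * bits_per_sample // 8


# (file size, num_frames, num_channels, bits_per_sample) from the output above
files = {
    "save_example_default.wav": (437530, 109368, 1, 32),
    "save_example_PCM_S16.wav": (218780, 109368, 1, 16),
}
for name, (size, frames, channels, bits) in files.items():
    payload = pcm_payload_bytes(frames, channels, bits)
    print(f"{name}: payload={payload} overhead={size - payload}")
# save_example_default.wav: payload=437472 overhead=58
# save_example_PCM_S16.wav: payload=218736 overhead=44
```

The payload halves exactly when going from 32 to 16 bits per sample; the few dozen remaining bytes are header data.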

torchaudio.save can also handle other formats. To name a few:

waveform, sample_rate = get_sample(resample=8000)

formats = [

"mp3",

"flac",

"vorbis",

"sph",

"amb",

"amr-nb",

"gsm",

]

for format in formats:
    path = f"{_SAMPLE_DIR}/save_example.{format}"
    torchaudio.save(path, waveform, sample_rate, format=format)
    inspect_file(path)

Out:

----------

Source: _assets/save_example.mp3

----------

- File size: 2664 bytes

- AudioMetaData(sample_rate=8000, num_frames=21312, num_channels=1, bits_per_sample=0, encoding=MP3)

----------

Source: _assets/save_example.flac

----------

- File size: 47315 bytes

- AudioMetaData(sample_rate=8000, num_frames=19840, num_channels=1, bits_per_sample=24, encoding=FLAC)

----------

Source: _assets/save_example.vorbis

----------

- File size: 9967 bytes

- AudioMetaData(sample_rate=8000, num_frames=19840, num_channels=1, bits_per_sample=0, encoding=VORBIS)

----------

Source: _assets/save_example.sph

----------

- File size: 80384 bytes

- AudioMetaData(sample_rate=8000, num_frames=19840, num_channels=1, bits_per_sample=32, encoding=PCM_S)

----------

Source: _assets/save_example.amb

----------

- File size: 79418 bytes

- AudioMetaData(sample_rate=8000, num_frames=19840, num_channels=1, bits_per_sample=32, encoding=PCM_F)

----------

Source: _assets/save_example.amr-nb

----------

- File size: 1618 bytes

- AudioMetaData(sample_rate=8000, num_frames=19840, num_channels=1, bits_per_sample=0, encoding=AMR_NB)

----------

Source: _assets/save_example.gsm

----------

- File size: 4092 bytes

- AudioMetaData(sample_rate=8000, num_frames=0, num_channels=1, bits_per_sample=0, encoding=GSM)
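One way to compare the compressed outputs is by average bitrate, that is, file size divided by duration. The sketch below does this with the sizes printed above. It assumes every file covers the same 19840 frames at 8000 Hz (the MP3 entry reports slightly more frames and the GSM entry reports none), and `average_bitrate_bps` is a hypothetical helper.

```python
def average_bitrate_bps(file_size_bytes, num_frames, sample_rate):
    """Average bitrate in bits per second, container overhead included."""
    duration = num_frames / sample_rate
    return file_size_bytes * 8 / duration


# Assumed common length: 19840 frames at 8000 Hz, as reported for FLAC and Vorbis.
sample_rate, num_frames = 8000, 19840
sizes = {"mp3": 2664, "flac": 47315, "vorbis": 9967, "amr-nb": 1618, "gsm": 4092}
for name, size in sizes.items():
    kbps = average_bitrate_bps(size, num_frames, sample_rate) / 1000
    print(f"{name}: {kbps:.1f} kbit/s")

# GSM-FR packs each 160-sample frame into 33 bytes, so its size is predictable.
gsm_bytes = (num_frames // 160) * 33
print(gsm_bytes)  # 4092
```

The predicted GSM size matches the reported 4092 bytes, so the clip length can be recovered from the file size even though num_frames is reported as 0.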

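Saving to file-like object¶

As with the other I/O functions, you can save audio to file-like objects. When saving to a file-like object, the format argument is required.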

waveform, sample_rate = get_sample()

# Saving to bytes buffer

buffer_ = io.BytesIO()

torchaudio.save(buffer_, waveform, sample_rate, format="wav")

buffer_.seek(0)

print(buffer_.read(16))

Out:

b'RIFF\x12\xad\x06\x00WAVEfmt '
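The 16 bytes printed above are the start of a RIFF/WAVE header and can be decoded with the standard struct module. This is a small sketch for inspection only; the field names are illustrative.

```python
import struct

header = b'RIFF\x12\xad\x06\x00WAVEfmt '  # the 16 bytes printed above

# Layout: 4-byte tag, little-endian uint32 size, 4-byte form type, 4-byte sub-chunk tag.
chunk_id, riff_size, form_type, subchunk_id = struct.unpack("<4sI4s4s", header)
print(chunk_id, riff_size, form_type, subchunk_id)
# b'RIFF' 437522 b'WAVE' b'fmt '

# The RIFF size field excludes the 8 bytes taken by the tag and the size itself.
print(riff_size + 8)  # 437530
```

The resulting 437530 bytes equals the file size reported earlier for save_example_default.wav, which was written from the same waveform with the default encoding.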
