
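Reinforcement Learning (PPO) with TorchRL Tutorial

Created On: March 15, 2023 | Last Updated: May 16, 2024 | Last Verified: November 5, 2024

Author: Vincent Moens

This tutorial demonstrates how to use PyTorch and torchrl to train a parametric policy network to solve the Inverted Pendulum task from the OpenAI-Gym/Farama-Gymnasium control library.

[Figure: Inverted pendulum]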

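Key learnings:

- How to create an environment in TorchRL, transform its outputs, and collect data from this environment;
- How to make your classes talk to each other using TensorDict;
- The basics of building your training loop with TorchRL:
  - How to compute the advantage signal for policy gradient methods;
  - How to create a stochastic policy using a probabilistic neural network;
  - How to create a dynamic replay buffer and sample from it without repetition.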

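We will cover six crucial components of TorchRL:

- environments
- transforms
- models (policy and value function)
- loss modules
- data collectors
- replay buffers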

If you are running this in Google Colab, make sure you install the following dependencies:

!pip3 install torchrl

!pip3 install gym[mujoco]

!pip3 install tqdm

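Proximal Policy Optimization (PPO) is a policy-gradient algorithm in which a batch of data is collected and directly consumed to train the policy to maximize the expected return under some proximity constraints. You can think of it as a sophisticated version of REINFORCE, the foundational policy-optimization algorithm. For more information, see the Proximal Policy Optimization Algorithms paper.

PPO is usually regarded as a fast and efficient method for online, on-policy reinforcement learning. TorchRL provides a loss module that does all the work for you, so that you can rely on this implementation and focus on solving your problem rather than reinventing the wheel every time you want to train a policy.

For completeness, here is a brief overview of what the loss computes, even though this is taken care of by our ClipPPOLoss module. The algorithm works as follows: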

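1. We sample a batch of data by playing the policy in the environment for a given number of steps.

2. Then, we perform a given number of optimization steps on random sub-samples of this batch, using a clipped version of the REINFORCE loss.

3. The clipping puts a pessimistic bound on our loss: lower return estimates are favored over higher ones.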

The precise formula of the loss is:

$$L(s,a,\theta_k,\theta)=\min\left(\frac{\pi_{\theta}(a\mid s)}{\pi_{\theta_k}(a\mid s)}\,A^{\pi_{\theta_k}}(s,a),\ \operatorname{clip}\left(\frac{\pi_{\theta}(a\mid s)}{\pi_{\theta_k}(a\mid s)},\,1-\epsilon,\,1+\epsilon\right)A^{\pi_{\theta_k}}(s,a)\right)$$

where π_θ is the policy being optimized, π_{θ_k} is the policy that collected the data, A^{π_{θ_k}} is the advantage, and ε is the clipping threshold (clip_epsilon in the hyperparameters).

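That loss has two components. In the first part of the minimum operator, we simply compute an importance-weighted version of the REINFORCE loss, that is, a REINFORCE loss corrected for the fact that the current policy configuration lags behind the one that was used for data collection. The second part of the minimum operator is a similar loss in which the ratio is clipped whenever it exceeds or falls below a given pair of thresholds.

This loss ensures that, whether the advantage is positive or negative, policy updates that would produce a significant shift from the previous configuration are discouraged.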
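You can also write the clipped objective directly for a single sample. Below is a minimal plain-Python sketch of the formula, term by term. It works from log-probabilities, as is common in practice. It illustrates the math only and does not show how ClipPPOLoss is implemented. The helper names ppo_clip_objective and ppo_clip_loss are hypothetical. ppo_clip_loss negates the mean objective so that it can be minimized.

```python
import math


def ppo_clip_objective(logp_new, logp_old, advantage, clip_epsilon=0.2):
    """Clipped surrogate for one (s, a) sample; PPO maximizes its average."""
    # Importance weight pi_theta(a|s) / pi_theta_k(a|s), computed from log-probs
    ratio = math.exp(logp_new - logp_old)
    # Keep the ratio inside [1 - eps, 1 + eps]
    clipped_ratio = min(max(ratio, 1.0 - clip_epsilon), 1.0 + clip_epsilon)
    # The min keeps the more pessimistic (lower) of the two estimates
    return min(ratio * advantage, clipped_ratio * advantage)


def ppo_clip_loss(samples, clip_epsilon=0.2):
    """Negative mean objective over (logp_new, logp_old, advantage) triples."""
    total = sum(ppo_clip_objective(n, o, a, clip_epsilon) for n, o, a in samples)
    return -total / len(samples)


# A ratio of 1.5 with a positive advantage is capped at 1 + eps = 1.2
print(ppo_clip_objective(math.log(1.5), 0.0, 2.0))
```

With a positive advantage, clipping caps the gain from increasing the ratio. With a negative advantage, the unclipped term is kept whenever it is lower, so the objective stays pessimistic in both cases.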

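This tutorial is structured as follows:

1. First, we will define a set of hyperparameters we will be using for training.
2. Next, we will focus on creating our environment, or simulator, using TorchRL's wrappers and transforms.
3. Next, we will design the policy network and the value model, which are indispensable to the loss function. These modules will be used to configure our loss module.
4. Next, we will create the replay buffer and data loader.
5. Finally, we will run our training loop and analyze the results.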

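Throughout this tutorial, we'll be using the tensordict library.

TensorDict is the lingua franca of TorchRL: it helps us abstract what a module reads and writes, so that we can care less about the specific data description and more about the algorithm itself.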

import warnings
warnings.filterwarnings("ignore")
from torch import multiprocessing

from collections import defaultdict

import matplotlib.pyplot as plt
import torch
from tensordict.nn import TensorDictModule
from tensordict.nn.distributions import NormalParamExtractor
from torch import nn
from torchrl.collectors import SyncDataCollector
from torchrl.data.replay_buffers import ReplayBuffer
from torchrl.data.replay_buffers.samplers import SamplerWithoutReplacement
from torchrl.data.replay_buffers.storages import LazyTensorStorage
from torchrl.envs import (Compose, DoubleToFloat, ObservationNorm, StepCounter,
                          TransformedEnv)
from torchrl.envs.libs.gym import GymEnv
from torchrl.envs.utils import check_env_specs, ExplorationType, set_exploration_type
from torchrl.modules import ProbabilisticActor, TanhNormal, ValueOperator
from torchrl.objectives import ClipPPOLoss
from torchrl.objectives.value import GAE
from tqdm import tqdm

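Define Hyperparameters

We set the hyperparameters for our algorithm. Depending on the resources available, one may choose to execute the policy on GPU or on another device.

frame_skip controls how many frames a single action is executed for. The remaining arguments that count frames must be corrected for this value, since one environment step actually returns frame_skip frames.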

is_fork = multiprocessing.get_start_method() == "fork"
device = (
    torch.device(0)
    if torch.cuda.is_available() and not is_fork
    else torch.device("cpu")
)
num_cells = 256  # number of cells in each layer i.e. output dim.
lr = 3e-4
max_grad_norm = 1.0

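Data collection parameters

When collecting data, we can choose how big each batch will be by defining a frames_per_batch parameter. We will also define how many frames (that is, the number of interactions with the simulator) we allow ourselves to use. In general, the goal of an RL algorithm is to learn to solve the task as fast as it can in terms of environment interactions: the lower the total_frames, the better.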

frames_per_batch = 1000

# For a complete training, bring the number of frames up to 1M

total_frames = 50_000
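The arithmetic below shows how these budgets interact. It is a plain-Python sketch and assumes that the collector yields exactly frames_per_batch frames per iteration. The collection_budget helper is hypothetical. Its frame_skip argument is also hypothetical: the tutorial's code never defines frame_skip, and the argument is there only to illustrate the correction described in the hyperparameter section.

```python
def collection_budget(total_frames, frames_per_batch, frame_skip=1):
    """Return (collector iterations, environment steps per batch).

    frame_skip is hypothetical here: with frame skipping, one environment
    step accounts for frame_skip frames, so fewer steps fill a batch.
    """
    if total_frames % frames_per_batch or frames_per_batch % frame_skip:
        raise ValueError("this sketch expects the budgets to divide evenly")
    iterations = total_frames // frames_per_batch  # batches yielded by the collector
    env_steps_per_batch = frames_per_batch // frame_skip
    return iterations, env_steps_per_batch


print(collection_budget(50_000, 1000))                # this tutorial's settings: (50, 1000)
print(collection_budget(1_000_000, 1000))             # the "complete training" budget: (1000, 1000)
print(collection_budget(50_000, 1000, frame_skip=2))  # hypothetical frame_skip: (50, 500)
```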

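PPO parameters

At each data collection (or batch collection), we run the optimization for a certain number of epochs in a nested training loop. Here, sub_batch_size is different from frames_per_batch above. The collector returns a batch of data whose size is set by frames_per_batch. During the inner training loop, we split that batch further into smaller sub-batches, and sub_batch_size controls their size.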

sub_batch_size = 64  # cardinality of the sub-samples gathered from the current data in the inner loop
num_epochs = 10  # number of optimization epochs over each batch of data collected
clip_epsilon = (
    0.2  # clip value for PPO loss: see the equation in the intro for more context.
)
gamma = 0.99
lmbda = 0.95
entropy_eps = 1e-4
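These numbers fix how much optimization happens per collected batch. The sketch below reproduces the loop bounds of the training loop: num_epochs passes, each consisting of frames_per_batch // sub_batch_size sub-batches. It is pure arithmetic and assumes nothing about TorchRL internals.

```python
frames_per_batch = 1000
total_frames = 50_000
sub_batch_size = 64
num_epochs = 10

sub_batches_per_epoch = frames_per_batch // sub_batch_size  # 15
samples_per_epoch = sub_batches_per_epoch * sub_batch_size  # 960, slightly fewer than 1000
updates_per_batch = num_epochs * sub_batches_per_epoch      # 150 gradient steps per batch
num_batches = total_frames // frames_per_batch              # 50 collector iterations
total_updates = num_batches * updates_per_batch             # 7500 gradient steps overall

print(sub_batches_per_epoch, samples_per_epoch, updates_per_batch, total_updates)
```

1000 is not a multiple of 64, so each epoch draws 960 samples. A sub_batch_size that divides frames_per_batch, such as 50 or 100, would use the whole batch in every epoch.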

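Define an environment

In RL, an environment is usually the way we refer to a simulator or a control system. Various libraries provide simulation environments for reinforcement learning, including Gymnasium (previously OpenAI Gym), the DeepMind Control Suite, and many others. As a general library, TorchRL aims to provide an interchangeable interface, so that you can easily swap one environment for another. For example, creating a wrapped gym environment takes only a few characters: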

base_env = GymEnv("InvertedDoublePendulum-v4", device=device)

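There are a few things to notice in this code. First, we created the environment by calling the GymEnv wrapper. Any extra keyword arguments are passed on to the gym.make method, which covers the most common environment construction commands.

Alternatively, one could also create a gym environment directly using gym.make(env_name, **kwargs) and wrap it in a GymWrapper class.

Also note the device argument. For gym, it only controls the device on which input actions and observed states are stored; execution is always done on CPU. The reason is simply that gym does not support on-device execution, unless specified otherwise. For other libraries, we have control over the execution device and, as much as we can, we try to stay consistent in terms of storage and execution backends.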

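Transforms

We will append some transforms to our environment to prepare the data for the policy. In Gym, this is usually achieved via wrappers. TorchRL takes a different approach, more similar to other PyTorch domain libraries, through the use of transforms. To add transforms to an environment, simply wrap it in a TransformedEnv instance and append the sequence of transforms to it. The transformed environment inherits the device and metadata of the wrapped environment, and transforms these depending on the sequence of transforms it contains.

Normalization

The first transform to encode is a normalization transform. As a rule of thumb, it is preferable to have data that loosely matches a unit Gaussian distribution. To obtain this, we will run a certain number of random steps in the environment and compute the summary statistics of these observations.

We'll append two other transforms. The DoubleToFloat transform converts double-precision entries to single-precision numbers, ready to be read by the policy. The StepCounter transform counts the steps before the environment is terminated. We will use this count as a supplementary measure of performance.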

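As we will see later, many of TorchRL's classes rely on TensorDict to communicate. You could think of it as a Python dictionary with some extra tensor features. In practice, this means that many modules we will be working with need to be told what key to read (in_keys) and what key to write (out_keys) in the tensordict they will receive. Usually, if out_keys is omitted, it is assumed that the in_keys entries will be updated in-place. For our transforms, the only entry we are interested in is called "observation", and our transform layers will be told to modify this entry and this entry only: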

env = TransformedEnv(
    base_env,
    Compose(
        # normalize observations
        ObservationNorm(in_keys=["observation"]),
        DoubleToFloat(),
        StepCounter(),
    ),
)
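The in_keys / out_keys convention can be illustrated without TorchRL. The class below is a hypothetical stand-in (DictModule is not a TorchRL or tensordict class) that works on a plain Python dict. It reads the entries named in in_keys, calls a function, and writes the results under out_keys. If out_keys is omitted, it updates the in_keys entries in place.

```python
class DictModule:
    """Hypothetical stand-in for a TensorDict-style module, operating on a dict."""

    def __init__(self, fn, in_keys, out_keys=None):
        self.fn = fn
        self.in_keys = list(in_keys)
        # Without out_keys, results overwrite the in_keys entries (in-place update)
        self.out_keys = list(out_keys) if out_keys is not None else list(self.in_keys)

    def __call__(self, data):
        outputs = self.fn(*(data[key] for key in self.in_keys))
        if len(self.out_keys) == 1:
            outputs = (outputs,)  # treat a single result as a 1-tuple
        for key, value in zip(self.out_keys, outputs):
            data[key] = value
        return data


# In-place update of "observation" only; other entries are left untouched
double_obs = DictModule(lambda obs: [2.0 * x for x in obs], in_keys=["observation"])
td = double_obs({"observation": [1.0, 2.0], "reward": [0.5]})

# One input entry, two output entries (like the loc/scale written by a policy network)
split = DictModule(lambda v: (v[:1], v[1:]), in_keys=["params"], out_keys=["loc", "scale"])
td2 = split({"params": [0.3, 1.2]})
```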

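As you may have noticed, we created a normalization layer but did not set its normalization parameters. To do this, ObservationNorm can automatically gather the summary statistics of our environment: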

env.transform[0].init_stats(num_iter=1000, reduce_dim=0, cat_dim=0)

The ObservationNorm transform has now been populated with a location and a scale that will be used to normalize the data.
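At its core, the statistics-gathering step computes a location and a scale for each dimension. The plain-Python sketch below shows the idea on fake observations: 3 dimensions instead of 11, drawn from a seeded Gaussian. It is not TorchRL's implementation. The init_stats and normalize helpers are hypothetical, and falling back to a scale of 1.0 for constant dimensions is a choice made for this sketch.

```python
import math
import random


def init_stats(observations):
    """Per-dimension mean (loc) and standard deviation (scale) of a batch of observations."""
    n = len(observations)
    dims = len(observations[0])
    loc = [sum(obs[d] for obs in observations) / n for d in range(dims)]
    scale = []
    for d in range(dims):
        std = math.sqrt(sum((obs[d] - loc[d]) ** 2 for obs in observations) / n)
        scale.append(std if std > 0 else 1.0)  # avoid dividing by zero on constant dims
    return loc, scale


def normalize(obs, loc, scale):
    """Map an observation to roughly zero mean and unit variance."""
    return [(x - m) / s for x, m, s in zip(obs, loc, scale)]


# Stand-in for observations gathered from 1000 random steps (3 dims instead of 11)
rng = random.Random(0)
observations = [[rng.gauss(5.0, 2.0), rng.gauss(-1.0, 0.5), 3.0] for _ in range(1000)]
loc, scale = init_stats(observations)
normalized = [normalize(obs, loc, scale) for obs in observations]
```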

Let us do a quick sanity check on the shape of our summary statistics:

print("normalization constant shape:", env.transform[0].loc.shape)

normalization constant shape: torch.Size([11])

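An environment is defined not only by its simulator and transforms, but also by a series of metadata that describe what can be expected during its execution.

For efficiency purposes, TorchRL is quite stringent when it comes to environment specs, but you can easily check that your environment specs are adequate.

In our example, GymWrapper and GymEnv (which inherits from it) already take care of setting the proper specs for your environment, so you should not have to worry about this.

Nevertheless, let's look at a concrete example by inspecting the specs of our transformed environment.

There are three specs to look at: observation_spec, which defines what to expect when executing an action in the environment; reward_spec, which indicates the reward domain; and finally input_spec (which contains the action_spec), which represents everything an environment requires to execute a single step.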

print("observation_spec:", env.observation_spec)

print("reward_spec:", env.reward_spec)

print("input_spec:", env.input_spec)

print("action_spec (as defined by input_spec):", env.action_spec)

observation_spec: CompositeSpec(

observation: UnboundedContinuousTensorSpec(

shape=torch.Size([11]),

space=None,

device=cpu,

dtype=torch.float32,

domain=continuous),

step_count: BoundedTensorSpec(

shape=torch.Size([1]),

space=ContinuousBox(

low=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.int64, contiguous=True),

high=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.int64, contiguous=True)),

device=cpu,

dtype=torch.int64,

domain=continuous),

device=cpu,

shape=torch.Size([]))

reward_spec: UnboundedContinuousTensorSpec(

shape=torch.Size([1]),

space=ContinuousBox(

low=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, contiguous=True),

high=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, contiguous=True)),

device=cpu,

dtype=torch.float32,

domain=continuous)

input_spec: CompositeSpec(

full_state_spec: CompositeSpec(

step_count: BoundedTensorSpec(

shape=torch.Size([1]),

space=ContinuousBox(

low=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.int64, contiguous=True),

high=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.int64, contiguous=True)),

device=cpu,

dtype=torch.int64,

domain=continuous),

device=cpu,

shape=torch.Size([])),

full_action_spec: CompositeSpec(

action: BoundedTensorSpec(

shape=torch.Size([1]),

space=ContinuousBox(

low=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, contiguous=True),

high=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, contiguous=True)),

device=cpu,

dtype=torch.float32,

domain=continuous),

device=cpu,

shape=torch.Size([])),

device=cpu,

shape=torch.Size([]))

action_spec (as defined by input_spec): BoundedTensorSpec(

shape=torch.Size([1]),

space=ContinuousBox(

low=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, contiguous=True),

high=Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, contiguous=True)),

device=cpu,

dtype=torch.float32,

domain=continuous)

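The check_env_specs() function runs a small rollout and compares its output against the environment specs. If no error is raised, we can be confident that the specs are properly defined: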

check_env_specs(env)
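Conceptually, a spec check compares what a rollout produces with what the specs promise. The sketch below is a heavily simplified, hypothetical version of that idea. check_step_against_spec and its spec layout were made up for this illustration and are not TorchRL's check_env_specs or CompositeSpec API. In this layout, each key maps to an expected length, a type, and optional bounds.

```python
def check_step_against_spec(step, spec):
    """Raise ValueError if a step dict does not match a {key: (length, type, low, high)} spec."""
    if set(step) != set(spec):
        raise ValueError(f"keys differ: {sorted(set(step) ^ set(spec))}")
    for key, (length, kind, low, high) in spec.items():
        values = step[key]
        if len(values) != length:
            raise ValueError(f"{key}: expected {length} values, got {len(values)}")
        for v in values:
            if not isinstance(v, kind):
                raise ValueError(f"{key}: expected {kind.__name__}, got {type(v).__name__}")
            if (low is not None and v < low) or (high is not None and v > high):
                raise ValueError(f"{key}: {v} outside [{low}, {high}]")


spec = {
    "observation": (3, float, None, None),  # unbounded, like the observation spec above
    "step_count": (1, int, 0, None),        # bounded from below
    "action": (1, float, -1.0, 1.0),        # bounds chosen for illustration
}
check_step_against_spec({"observation": [0.1, -2.0, 5.0], "step_count": [3], "action": [0.5]}, spec)
```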

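For fun, let's see what a simple random rollout looks like. You can call env.rollout(n_steps) to get an overview of what the environment inputs and outputs look like. Actions are automatically drawn from the action spec domain, so you don't need to design a random sampler.

Typically, at each step, an RL environment receives an action as input and outputs an observation, a reward and a done state. The observation may be composite, meaning that it can consist of more than one tensor. This is not a problem for TorchRL, since the whole set of observations is automatically packed into the output TensorDict. After executing a rollout (that is, a sequence of environment steps with randomly generated actions) over a given number of steps, we retrieve a TensorDict instance whose shape matches the trajectory length: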

rollout = env.rollout(3)

print("rollout of three steps:", rollout)

print("Shape of the rollout TensorDict:", rollout.batch_size)

rollout of three steps: TensorDict(

fields={

action: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.float32, is_shared=False),

done: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.bool, is_shared=False),

next: TensorDict(

fields={

done: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.bool, is_shared=False),

observation: Tensor(shape=torch.Size([3, 11]), device=cpu, dtype=torch.float32, is_shared=False),

reward: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.float32, is_shared=False),

step_count: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.int64, is_shared=False),

terminated: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.bool, is_shared=False),

truncated: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.bool, is_shared=False)},

batch_size=torch.Size([3]),

device=cpu,

is_shared=False),

observation: Tensor(shape=torch.Size([3, 11]), device=cpu, dtype=torch.float32, is_shared=False),

step_count: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.int64, is_shared=False),

terminated: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.bool, is_shared=False),

truncated: Tensor(shape=torch.Size([3, 1]), device=cpu, dtype=torch.bool, is_shared=False)},

batch_size=torch.Size([3]),

device=cpu,

is_shared=False)

Shape of the rollout TensorDict: torch.Size([3])

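Our rollout data has a shape of torch.Size([3]), which matches the number of steps we ran it for. The "next" entry points to the data coming after the current step.

In most cases, the "next" data at time t matches the data at t+1, but this may not hold if we use some specific transforms (for example, multi-step transforms).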
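Plain dicts are enough to mimic how the root entries relate to the "next" entries. In the toy rollout below, the dynamics are hypothetical and exist only for illustration. Each step stores the current observation at the root and the following observation under "next". Without any multi-step transform, the "next" data at step t therefore equals the root data at step t + 1.

```python
def toy_rollout(n_steps, start=0.0, action=1.0):
    """Return a list of step dicts; toy dynamics: observation += action, reward = action."""
    rollout = []
    obs = start
    for _ in range(n_steps):
        next_obs = obs + action
        rollout.append(
            {
                "observation": obs,  # data at time t
                "action": action,
                "next": {"observation": next_obs, "reward": action},  # data after step t
            }
        )
        obs = next_obs
    return rollout


rollout = toy_rollout(3)
print(len(rollout))  # one entry per step, like the batch size torch.Size([3]) above
for t in range(len(rollout) - 1):
    # "next" at t matches the root entries at t + 1
    assert rollout[t]["next"]["observation"] == rollout[t + 1]["observation"]
```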

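Policy

PPO uses a stochastic policy to handle exploration. This means that our neural network has to output the parameters of a distribution, rather than a single value corresponding to the action taken.

As the data is continuous, we use a Tanh-Normal distribution to respect the action space boundaries. TorchRL provides this distribution, and the only thing we need to care about is building a neural network that outputs the right number of parameters for the policy to work with (a location, or mean, and a scale):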

$$f_{\theta}(\text{observation}) = \mu_{\theta}(\text{observation}),\ \sigma^{+}_{\theta}(\text{observation})$$

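The only extra difficulty here is to split our output into two equal parts and map the second part to a strictly positive space.

We design the policy in three steps:

1. Define a neural network D_obs -> 2 * D_action. Indeed, our loc (mu) and scale (sigma) both have dimension D_action.
2. Append a NormalParamExtractor to extract a location and a scale (that is, it splits the input into two equal parts and applies a positive transformation to the scale parameter).
3. Create a probabilistic TensorDictModule that can generate this distribution and sample from it.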

actor_net = nn.Sequential(
    nn.LazyLinear(num_cells, device=device),
    nn.Tanh(),
    nn.LazyLinear(num_cells, device=device),
    nn.Tanh(),
    nn.LazyLinear(num_cells, device=device),
    nn.Tanh(),
    nn.LazyLinear(2 * env.action_spec.shape[-1], device=device),
    NormalParamExtractor(),
)
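The job NormalParamExtractor performs can be sketched in a few lines. The 2 * D_action output is split into two halves, and the second half is mapped to positive values. The softplus used below is an assumption for illustration. NormalParamExtractor lets you choose this mapping, and its default may differ (our understanding is that it is a biased softplus). The helper names are hypothetical.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x)); positive, though it underflows to 0.0 for very negative x."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def split_normal_params(output):
    """Split a 2 * D_action vector into (loc, scale) with a positive scale."""
    if len(output) % 2:
        raise ValueError("expected an even-length output (2 * D_action)")
    half = len(output) // 2
    loc = output[:half]                           # first half: location (mean)
    scale = [softplus(x) for x in output[half:]]  # second half: mapped to positive values
    return loc, scale


loc, scale = split_normal_params([0.3, -0.7, -5.0, 2.0])  # D_action = 2
```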

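To enable the policy to "talk" with the environment through the tensordict data carrier, we wrap the nn.Module in a TensorDictModule. This class simply reads the in_keys it is provided with and writes the outputs in-place at the registered out_keys.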

policy_module = TensorDictModule(
    actor_net, in_keys=["observation"], out_keys=["loc", "scale"]
)

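We now need to build a distribution out of the location and scale of our normal distribution. To do so, we instruct the ProbabilisticActor class to build a TanhNormal out of the location and scale parameters. We also provide the minimum and maximum values of this distribution, which we gather from the environment specs.

The names of the in_keys (and hence the names of the out_keys of the TensorDictModule above) cannot be set to any arbitrary value, because the TanhNormal distribution constructor expects the loc and scale keyword arguments. That said, ProbabilisticActor also accepts in_keys of type Dict[str, str], where each key-value pair indicates which in_key string should be used for each keyword argument.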

policy_module = ProbabilisticActor(
    module=policy_module,
    spec=env.action_spec,
    in_keys=["loc", "scale"],
    distribution_class=TanhNormal,
    distribution_kwargs={
        "min": env.action_spec.space.low,
        "max": env.action_spec.space.high,
    },
    # we'll need the log-prob for the numerator of the importance weights
    return_log_prob=True,
)
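Sampling from a TanhNormal bounded by min and max takes three steps. First draw from a Gaussian with the predicted loc and scale, then squash the draw with tanh, and finally map it affinely onto the action bounds. The plain-Python sketch below covers only that sampling path. It leaves out the log-probability correction and is not TorchRL's implementation. tanh_normal_sample is a hypothetical helper.

```python
import math
import random


def tanh_normal_sample(loc, scale, low, high, rng=random):
    """Sample a Gaussian, squash it with tanh, then rescale it onto [low, high]."""
    x = rng.gauss(loc, scale)                    # unbounded Gaussian sample
    y = math.tanh(x)                             # in [-1, 1] (saturates at +/-1 for large |x|)
    return low + (high - low) * (y + 1.0) / 2.0  # affine map from [-1, 1] to [low, high]


rng = random.Random(0)
actions = [tanh_normal_sample(0.0, 3.0, -2.0, 0.5, rng) for _ in range(1000)]
```

Even with a wide scale, every action stays within the bounds, which is why a Tanh-Normal suits bounded continuous action spaces.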

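Value network

The value network is a crucial component of the PPO algorithm, even though it won't be used at inference time. This module reads the observations and returns an estimate of the discounted return for the rest of the trajectory. This lets us amortize learning by relying on a utility estimate that is learned on the fly during training. Our value network has the same structure as the policy network, but for simplicity we give it its own set of parameters.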

value_net = nn.Sequential(
    nn.LazyLinear(num_cells, device=device),
    nn.Tanh(),
    nn.LazyLinear(num_cells, device=device),
    nn.Tanh(),
    nn.LazyLinear(num_cells, device=device),
    nn.Tanh(),
    nn.LazyLinear(1, device=device),
)

value_module = ValueOperator(
    module=value_net,
    in_keys=["observation"],
)

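Let's try our policy and value modules. As we said earlier, using TensorDictModule makes it possible to feed the output of the environment directly to these modules, because they know what information to read and where to write it: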

print("Running policy:", policy_module(env.reset()))

print("Running value:", value_module(env.reset()))

Running policy: TensorDict(

fields={

action: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, is_shared=False),

done: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.bool, is_shared=False),

loc: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, is_shared=False),

observation: Tensor(shape=torch.Size([11]), device=cpu, dtype=torch.float32, is_shared=False),

sample_log_prob: Tensor(shape=torch.Size([]), device=cpu, dtype=torch.float32, is_shared=False),

scale: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, is_shared=False),

step_count: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.int64, is_shared=False),

terminated: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.bool, is_shared=False),

truncated: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.bool, is_shared=False)},

batch_size=torch.Size([]),

device=cpu,

is_shared=False)

Running value: TensorDict(

fields={

done: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.bool, is_shared=False),

observation: Tensor(shape=torch.Size([11]), device=cpu, dtype=torch.float32, is_shared=False),

state_value: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.float32, is_shared=False),

step_count: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.int64, is_shared=False),

terminated: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.bool, is_shared=False),

truncated: Tensor(shape=torch.Size([1]), device=cpu, dtype=torch.bool, is_shared=False)},

batch_size=torch.Size([]),

device=cpu,

is_shared=False)

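Data collector

TorchRL provides a set of DataCollector classes. Briefly, these classes execute three operations: reset an environment, compute an action given the latest observation, and execute a step in the environment. The last two steps repeat until the environment signals a stop (or reaches a done state).

They allow you to control how many frames to collect at each iteration (through the frames_per_batch parameter), when to reset the environment (through the max_frames_per_traj argument), on which device the policy should be executed, and so on. They are also designed to work efficiently with batched and multiprocessed environments.

The simplest data collector is the SyncDataCollector: it is an iterator that you can use to get batches of data of a given length, and it stops once a total number of frames (total_frames) has been collected.

Other data collectors (MultiSyncDataCollector and MultiaSyncDataCollector) execute the same operations synchronously and asynchronously, respectively, over a set of multiprocessed workers.

As with the policy and environment before, the data collector returns TensorDict instances whose total number of elements matches frames_per_batch. Using TensorDict to pass data to the training loop lets you write data-loading pipelines that are completely oblivious to the specifics of the rollout content.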

collector = SyncDataCollector(
    env,
    policy_module,
    frames_per_batch=frames_per_batch,
    total_frames=total_frames,
    split_trajs=False,
    device=device,
)
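A hypothetical toy environment is enough to sketch the collector's control flow. The loop resets, acts, steps, and repeats, yielding fixed-size batches until total_frames have been gathered. CountdownEnv and collect are made up for this illustration. They mirror the behavior described above rather than SyncDataCollector's actual implementation, and they ignore devices, batched environments and max_frames_per_traj.

```python
class CountdownEnv:
    """Hypothetical toy environment whose episodes end after `horizon` steps."""

    def __init__(self, horizon=4):
        self.horizon = horizon
        self.t = 0

    def reset(self):
        self.t = 0
        return {"observation": 0.0}

    def step(self, action):
        self.t += 1
        return {"observation": float(self.t), "reward": 1.0, "done": self.t >= self.horizon}


def collect(env, policy, frames_per_batch, total_frames):
    """Yield batches of frames_per_batch transitions until total_frames are collected."""
    obs = env.reset()
    collected = 0
    while collected < total_frames:
        batch = []
        for _ in range(frames_per_batch):
            action = policy(obs)    # compute an action from the latest observation
            nxt = env.step(action)  # execute one step in the environment
            batch.append({"observation": obs["observation"], "action": action, "next": nxt})
            # reset when the environment signals done, otherwise keep going
            obs = env.reset() if nxt["done"] else {"observation": nxt["observation"]}
        collected += len(batch)
        yield batch


def constant_policy(obs):
    """Placeholder policy that ignores its input."""
    return 0.0


batches = list(collect(CountdownEnv(horizon=4), constant_policy, frames_per_batch=10, total_frames=30))
```

In this sketch, episodes continue across batch boundaries instead of restarting at the start of each batch.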

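Replay buffer

Replay buffers are a common building block of off-policy RL algorithms. In an on-policy context, a replay buffer is refilled every time a batch of data is collected, and its data is consumed repeatedly for a certain number of epochs.

TorchRL's replay buffers are built using a common container, ReplayBuffer, which takes the components of the buffer as arguments: a storage, a writer, a sampler and possibly some transforms. Only the storage (which indicates the replay buffer capacity) is mandatory. We also specify a sampler without repetition to avoid sampling the same item multiple times in one epoch. Using a replay buffer for PPO is not mandatory, and we could simply sample the sub-batches from the collected batch, but these classes make it easy to build the inner training loop in a reproducible way.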

replay_buffer = ReplayBuffer(
    storage=LazyTensorStorage(max_size=frames_per_batch),
    sampler=SamplerWithoutReplacement(),
)
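To sample without replacement within one pass, one approach is to shuffle the indices once and slice them into disjoint sub-batches. The plain-Python version below is only an analogy for SamplerWithoutReplacement. The helper name is hypothetical, and dropping the trailing incomplete chunk is a choice made for this sketch.

```python
import random


def minibatches_without_replacement(data, batch_size, rng=random):
    """Yield disjoint sub-batches drawn from one random permutation of data."""
    indices = list(range(len(data)))
    rng.shuffle(indices)
    # Only full sub-batches are produced; leftover items are skipped in this pass
    for start in range(0, len(indices) - batch_size + 1, batch_size):
        yield [data[i] for i in indices[start:start + batch_size]]


data = list(range(1000))  # stand-in for the 1000 frames of one collected batch
sub_batches = list(minibatches_without_replacement(data, 64, random.Random(0)))
seen = [item for batch in sub_batches for item in batch]
```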

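Loss function

For convenience, the PPO loss can be imported directly from TorchRL as the ClipPPOLoss class. This is the easiest way to use PPO: it hides away the mathematical operations of PPO and the control flow that goes with them.

PPO requires some "advantage estimation" to be computed. In short, an advantage is a value that reflects an expectation over the return value while dealing with the bias/variance tradeoff.

To compute the advantage, one just needs to (1) build the advantage module, which uses our value operator, and (2) pass each batch of data through it before each epoch.

The GAE module will update the input tensordict with new "advantage" and "value_target" entries.

The "value_target" is a gradient-free tensor that represents the empirical value that the value network should predict for the input observation.

Both of these will be used by ClipPPOLoss to return the policy and value losses.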

advantage_module = GAE(
    gamma=gamma, lmbda=lmbda, value_network=value_module, average_gae=True
)
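To build intuition for what the GAE module writes into the tensordict, here is a plain-Python sketch of Generalized Advantage Estimation over a single trajectory. It assumes a single done flag that both stops bootstrapping and resets the recursion. TorchRL distinguishes terminated from truncated, and this sketch ignores that distinction. The standardize helper reflects our reading that average_gae=True standardizes the advantages. Both helper names are hypothetical.

```python
import math


def gae(rewards, values, next_values, dones, gamma=0.99, lmbda=0.95):
    """Return (advantages, value_targets) for one trajectory.

    values[t] = V(s_t), next_values[t] = V(s_{t+1}),
    dones[t] is True if the episode ended after step t.
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        not_done = 0.0 if dones[t] else 1.0
        # One-step TD error; no bootstrapping past the end of an episode
        delta = rewards[t] + gamma * next_values[t] * not_done - values[t]
        # Exponentially weighted (gamma * lmbda) sum of future TD errors
        running = delta + gamma * lmbda * not_done * running
        advantages[t] = running
    # Regression target for the value network: advantage + current estimate
    value_targets = [a + v for a, v in zip(advantages, values)]
    return advantages, value_targets


def standardize(xs, eps=1e-8):
    """Shift to zero mean and scale to unit standard deviation."""
    mean = sum(xs) / len(xs)
    std = math.sqrt(sum((x - mean) ** 2 for x in xs) / len(xs))
    return [(x - mean) / (std + eps) for x in xs]
```

With lmbda = 1 the advantage becomes a discounted Monte-Carlo return minus the value baseline. With lmbda = 0 it reduces to the one-step TD error. The lmbda = 0.95 used in this tutorial sits between the two, trading bias against variance.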

loss_module = ClipPPOLoss(
    actor_network=policy_module,
    critic_network=value_module,
    clip_epsilon=clip_epsilon,
    entropy_bonus=bool(entropy_eps),
    entropy_coef=entropy_eps,
    # the critic settings below are set explicitly for completeness
    critic_coef=1.0,
    loss_critic_type="smooth_l1",
)

optim = torch.optim.Adam(loss_module.parameters(), lr)
scheduler = torch.optim.lr_scheduler.CosineAnnealingLR(
    optim, total_frames // frames_per_batch, 0.0
)
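The learning-rate scheduler follows the closed-form cosine annealing schedule documented for torch.optim.lr_scheduler.CosineAnnealingLR. The training loop steps it once per collected batch. Over T_max = total_frames // frames_per_batch = 50 steps, the learning rate therefore decays from 3e-4 to eta_min = 0. The plain-Python function below evaluates that formula. cosine_annealing_lr is a hypothetical helper, not a PyTorch function.

```python
import math


def cosine_annealing_lr(step, base_lr, t_max, eta_min=0.0):
    """Learning rate after `step` scheduler steps under cosine annealing."""
    return eta_min + (base_lr - eta_min) * (1.0 + math.cos(math.pi * step / t_max)) / 2.0


t_max = 50_000 // 1000  # total_frames // frames_per_batch in this tutorial
schedule = [cosine_annealing_lr(step, 3e-4, t_max) for step in range(t_max + 1)]
print(f"{schedule[0]:.1e} {schedule[t_max // 2]:.1e} {schedule[-1]:.1e}")
```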

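Training loop

We now have all the pieces needed to code our training loop. The steps include:

- Collect data
  - Compute advantage
    - Loop over the collected data to compute loss values
    - Back propagate
    - Optimize
    - Repeat
  - Repeat
- Repeat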

logs = defaultdict(list)
pbar = tqdm(total=total_frames)
eval_str = ""

# We iterate over the collector until it reaches the total number of frames it was
# designed to collect:
for i, tensordict_data in enumerate(collector):
    # we now have a batch of data to work with. Let's learn something from it.
    for _ in range(num_epochs):
        # We'll need an "advantage" signal to make PPO work.
        # We re-compute it at each epoch as its value depends on the value
        # network which is updated in the inner loop.
        advantage_module(tensordict_data)
        data_view = tensordict_data.reshape(-1)
        replay_buffer.extend(data_view.cpu())
        for _ in range(frames_per_batch // sub_batch_size):
            subdata = replay_buffer.sample(sub_batch_size)
            loss_vals = loss_module(subdata.to(device))
            loss_value = (
                loss_vals["loss_objective"]
                + loss_vals["loss_critic"]
                + loss_vals["loss_entropy"]
            )

            # Optimization: backward, grad clipping and optimization step
            loss_value.backward()
            # this is not strictly mandatory but it's good practice to keep
            # your gradient norm bounded
            torch.nn.utils.clip_grad_norm_(loss_module.parameters(), max_grad_norm)
            optim.step()
            optim.zero_grad()

    logs["reward"].append(tensordict_data["next", "reward"].mean().item())
    pbar.update(tensordict_data.numel())
    cum_reward_str = (
        f"average reward={logs['reward'][-1]: 4.4f} (init={logs['reward'][0]: 4.4f})"
    )
    logs["step_count"].append(tensordict_data["step_count"].max().item())
    stepcount_str = f"step count (max): {logs['step_count'][-1]}"
    logs["lr"].append(optim.param_groups[0]["lr"])
    lr_str = f"lr policy: {logs['lr'][-1]: 4.4f}"
    if i % 10 == 0:
        # We evaluate the policy once every 10 batches of data.
        # Evaluation is rather simple: execute the policy without exploration
        # (take the expected value of the action distribution) for a given
        # number of steps (1000, which is our ``env`` horizon).
        # The ``rollout`` method of the ``env`` can take a policy as argument:
        # it will then execute this policy at each step.
        with set_exploration_type(ExplorationType.MEAN), torch.no_grad():
            # execute a rollout with the trained policy
            eval_rollout = env.rollout(1000, policy_module)
            logs["eval reward"].append(eval_rollout["next", "reward"].mean().item())
            logs["eval reward (sum)"].append(
                eval_rollout["next", "reward"].sum().item()
            )
            logs["eval step_count"].append(eval_rollout["step_count"].max().item())
            eval_str = (
                f"eval cumulative reward: {logs['eval reward (sum)'][-1]: 4.4f} "
                f"(init: {logs['eval reward (sum)'][0]: 4.4f}), "
                f"eval step-count: {logs['eval step_count'][-1]}"
            )
            del eval_rollout
    pbar.set_description(", ".join([eval_str, cum_reward_str, stepcount_str, lr_str]))

    # We're also using a learning rate scheduler. Like the gradient clipping,
    # this is a nice-to-have but nothing necessary for PPO to work.
    scheduler.step()

0%| | 0/50000 [00:00<?, ?it/s]

2%|2 | 1000/50000 [00:03<03:10, 256.79it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.0998 (init= 9.0998), step count (max): 16, lr policy: 0.0003: 2%|2 | 1000/50000 [00:03<03:10, 256.79it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.0998 (init= 9.0998), step count (max): 16, lr policy: 0.0003: 4%|4 | 2000/50000 [00:07<03:04, 259.51it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.1175 (init= 9.0998), step count (max): 14, lr policy: 0.0003: 4%|4 | 2000/50000 [00:07<03:04, 259.51it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.1175 (init= 9.0998), step count (max): 14, lr policy: 0.0003: 6%|6 | 3000/50000 [00:11<02:59, 261.82it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.1509 (init= 9.0998), step count (max): 14, lr policy: 0.0003: 6%|6 | 3000/50000 [00:11<02:59, 261.82it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.1509 (init= 9.0998), step count (max): 14, lr policy: 0.0003: 8%|8 | 4000/50000 [00:15<02:54, 263.16it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.1931 (init= 9.0998), step count (max): 22, lr policy: 0.0003: 8%|8 | 4000/50000 [00:15<02:54, 263.16it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.1931 (init= 9.0998), step count (max): 22, lr policy: 0.0003: 10%|# | 5000/50000 [00:19<02:50, 264.70it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2155 (init= 9.0998), step count (max): 27, lr policy: 0.0003: 10%|# | 5000/50000 [00:19<02:50, 264.70it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2155 (init= 9.0998), step count (max): 27, lr policy: 0.0003: 12%|#2 | 6000/50000 [00:22<02:45, 265.97it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2189 (init= 9.0998), step count (max): 25, lr policy: 0.0003: 12%|#2 | 6000/50000 [00:22<02:45, 265.97it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2189 (init= 9.0998), step count (max): 25, lr policy: 0.0003: 14%|#4 | 7000/50000 [00:26<02:41, 266.81it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2371 (init= 9.0998), step count (max): 47, lr policy: 0.0003: 14%|#4 | 7000/50000 [00:26<02:41, 266.81it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2371 (init= 9.0998), step count (max): 47, lr policy: 0.0003: 16%|#6 | 8000/50000 [00:30<02:37, 267.35it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2277 (init= 9.0998), step count (max): 36, lr policy: 0.0003: 16%|#6 | 8000/50000 [00:30<02:37, 267.35it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2277 (init= 9.0998), step count (max): 36, lr policy: 0.0003: 18%|#8 | 9000/50000 [00:33<02:32, 268.43it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2517 (init= 9.0998), step count (max): 41, lr policy: 0.0003: 18%|#8 | 9000/50000 [00:33<02:32, 268.43it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2517 (init= 9.0998), step count (max): 41, lr policy: 0.0003: 20%|## | 10000/50000 [00:37<02:32, 262.98it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2600 (init= 9.0998), step count (max): 41, lr policy: 0.0003: 20%|## | 10000/50000 [00:37<02:32, 262.98it/s]

eval cumulative reward: 101.6955 (init: 101.6955), eval step-count: 10, average reward= 9.2600 (init= 9.0998), step count (max): 41, lr policy: 0.0003: 22%|##2 | 11000/50000 [00:41<02:26, 265.59it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2575 (init= 9.0998), step count (max): 38, lr policy: 0.0003: 22%|##2 | 11000/50000 [00:41<02:26, 265.59it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2575 (init= 9.0998), step count (max): 38, lr policy: 0.0003: 24%|##4 | 12000/50000 [00:45<02:23, 265.18it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2730 (init= 9.0998), step count (max): 56, lr policy: 0.0003: 24%|##4 | 12000/50000 [00:45<02:23, 265.18it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2730 (init= 9.0998), step count (max): 56, lr policy: 0.0003: 26%|##6 | 13000/50000 [00:48<02:18, 267.12it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2719 (init= 9.0998), step count (max): 55, lr policy: 0.0003: 26%|##6 | 13000/50000 [00:48<02:18, 267.12it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2719 (init= 9.0998), step count (max): 55, lr policy: 0.0003: 28%|##8 | 14000/50000 [00:52<02:14, 268.62it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2725 (init= 9.0998), step count (max): 102, lr policy: 0.0003: 28%|##8 | 14000/50000 [00:52<02:14, 268.62it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2725 (init= 9.0998), step count (max): 102, lr policy: 0.0003: 30%|### | 15000/50000 [00:56<02:09, 269.74it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2774 (init= 9.0998), step count (max): 95, lr policy: 0.0002: 30%|### | 15000/50000 [00:56<02:09, 269.74it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2774 (init= 9.0998), step count (max): 95, lr policy: 0.0002: 32%|###2 | 16000/50000 [01:00<02:05, 270.42it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2724 (init= 9.0998), step count (max): 59, lr policy: 0.0002: 32%|###2 | 16000/50000 [01:00<02:05, 270.42it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2724 (init= 9.0998), step count (max): 59, lr policy: 0.0002: 34%|###4 | 17000/50000 [01:03<02:01, 271.23it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2809 (init= 9.0998), step count (max): 89, lr policy: 0.0002: 34%|###4 | 17000/50000 [01:03<02:01, 271.23it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2809 (init= 9.0998), step count (max): 89, lr policy: 0.0002: 36%|###6 | 18000/50000 [01:07<01:57, 271.33it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2828 (init= 9.0998), step count (max): 83, lr policy: 0.0002: 36%|###6 | 18000/50000 [01:07<01:57, 271.33it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2828 (init= 9.0998), step count (max): 83, lr policy: 0.0002: 38%|###8 | 19000/50000 [01:11<01:54, 271.37it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2828 (init= 9.0998), step count (max): 69, lr policy: 0.0002: 38%|###8 | 19000/50000 [01:11<01:54, 271.37it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2828 (init= 9.0998), step count (max): 69, lr policy: 0.0002: 40%|#### | 20000/50000 [01:14<01:50, 270.62it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2765 (init= 9.0998), step count (max): 66, lr policy: 0.0002: 40%|#### | 20000/50000 [01:14<01:50, 270.62it/s]

eval cumulative reward: 437.4563 (init: 101.6955), eval step-count: 46, average reward= 9.2765 (init= 9.0998), step count (max): 66, lr policy: 0.0002: 42%|####2 | 21000/50000 [01:18<01:46, 271.33it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2970 (init= 9.0998), step count (max): 121, lr policy: 0.0002: 42%|####2 | 21000/50000 [01:18<01:46, 271.33it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2970 (init= 9.0998), step count (max): 121, lr policy: 0.0002: 44%|####4 | 22000/50000 [01:22<01:44, 267.08it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.3032 (init= 9.0998), step count (max): 125, lr policy: 0.0002: 44%|####4 | 22000/50000 [01:22<01:44, 267.08it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.3032 (init= 9.0998), step count (max): 125, lr policy: 0.0002: 46%|####6 | 23000/50000 [01:26<01:42, 262.83it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2970 (init= 9.0998), step count (max): 78, lr policy: 0.0002: 46%|####6 | 23000/50000 [01:26<01:42, 262.83it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2970 (init= 9.0998), step count (max): 78, lr policy: 0.0002: 48%|####8 | 24000/50000 [01:29<01:37, 265.77it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2985 (init= 9.0998), step count (max): 113, lr policy: 0.0002: 48%|####8 | 24000/50000 [01:29<01:37, 265.77it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2985 (init= 9.0998), step count (max): 113, lr policy: 0.0002: 50%|##### | 25000/50000 [01:33<01:33, 267.84it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.3044 (init= 9.0998), step count (max): 102, lr policy: 0.0002: 50%|##### | 25000/50000 [01:33<01:33, 267.84it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.3044 (init= 9.0998), step count (max): 102, lr policy: 0.0002: 52%|#####2 | 26000/50000 [01:37<01:29, 269.15it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2937 (init= 9.0998), step count (max): 87, lr policy: 0.0001: 52%|#####2 | 26000/50000 [01:37<01:29, 269.15it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2937 (init= 9.0998), step count (max): 87, lr policy: 0.0001: 54%|#####4 | 27000/50000 [01:41<01:25, 268.28it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2961 (init= 9.0998), step count (max): 70, lr policy: 0.0001: 54%|#####4 | 27000/50000 [01:41<01:25, 268.28it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2961 (init= 9.0998), step count (max): 70, lr policy: 0.0001: 56%|#####6 | 28000/50000 [01:44<01:21, 268.42it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2842 (init= 9.0998), step count (max): 60, lr policy: 0.0001: 56%|#####6 | 28000/50000 [01:44<01:21, 268.42it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2842 (init= 9.0998), step count (max): 60, lr policy: 0.0001: 58%|#####8 | 29000/50000 [01:48<01:17, 269.30it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2952 (init= 9.0998), step count (max): 67, lr policy: 0.0001: 58%|#####8 | 29000/50000 [01:48<01:17, 269.30it/s]

eval cumulative reward: 867.6711 (init: 101.6955), eval step-count: 92, average reward= 9.2952 (init= 9.0998), step count (max): 67, lr policy: 0.0001: 60%|###### | 30000/50000 [01:52<01:14, 270.23it/s]

eval cumulative reward: 344.6715 (init: 101.6955), eval step-count: 36, average reward= 9.3355 (init= 9.0998), step count (max): 348, lr policy: 0.0000: 100%|##########| 50000/50000 [03:06<00:00, 272.31it/s]

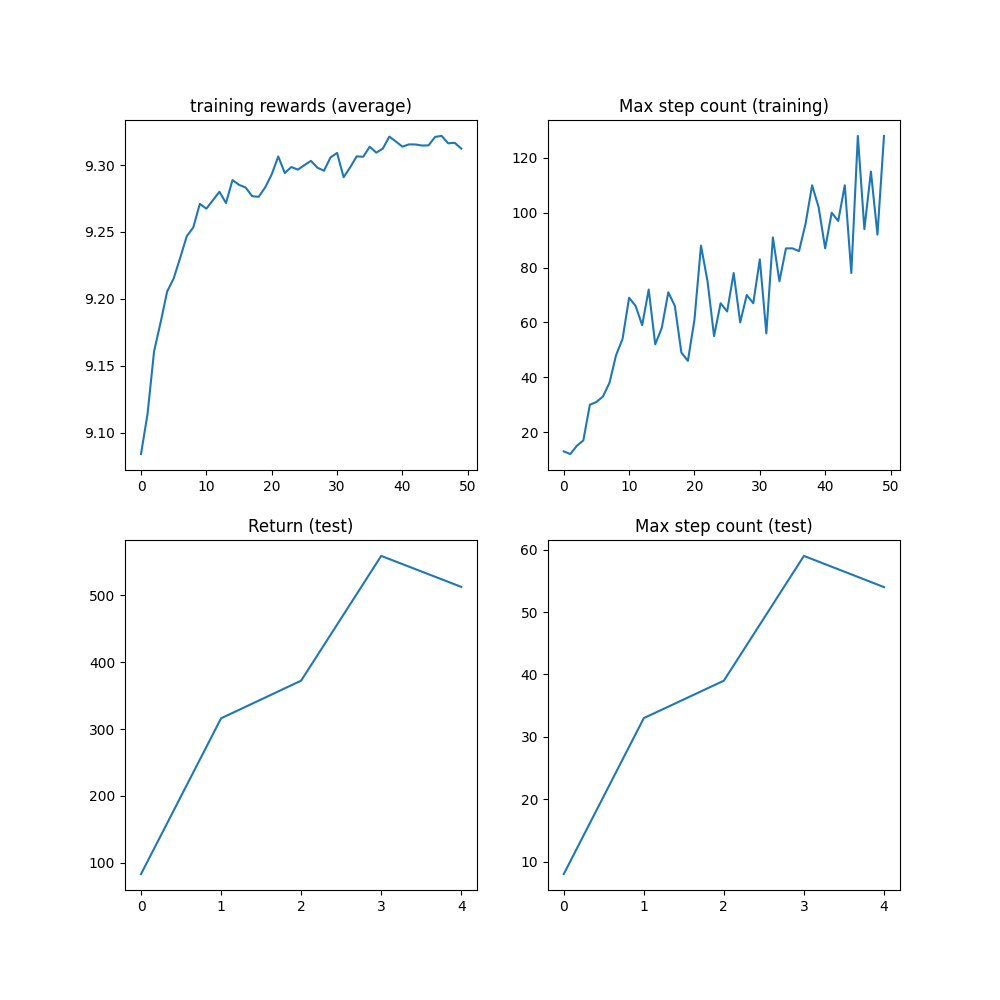

Results

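Given a 1M-step training budget, the algorithm should reach the maximum step count of 1000 steps before that budget is used up. 1000 is the maximum number of steps before a trajectory is truncated. The run shown above uses only 50,000 frames, so its step counts stay well below this cap.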
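The cap acts as a time limit. After a fixed number of steps the episode is cut off (truncated), even if the pendulum has not fallen. This is different from termination, which happens when the pendulum falls.

The sketch below shows that bookkeeping in plain Python. `NeverFallsEnv`, `StepLimit` and `rollout` are hypothetical names, not TorchRL APIs. The toy environment also assumes a reward of 1 per step. In TorchRL itself, steps are counted by the `StepCounter` transform imported earlier. The 1000-step limit for this task is assumed here to come from the environment's own time limit.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class StepResult:
    observation: float
    reward: float
    terminated: bool  # the task itself ended (e.g. the pendulum fell)
    truncated: bool   # the episode was cut off by the step limit


class NeverFallsEnv:
    """Hypothetical toy environment that never terminates on its own."""

    def __init__(self) -> None:
        self.t = 0

    def reset(self) -> float:
        self.t = 0
        return 0.0

    def step(self, action: float) -> StepResult:
        self.t += 1
        # Toy assumption: reward of 1 per step, never terminates
        return StepResult(observation=float(self.t), reward=1.0,
                          terminated=False, truncated=False)


class StepLimit:
    """Hypothetical wrapper: counts steps and flags truncation at max_steps."""

    def __init__(self, env, max_steps: int) -> None:
        self.env = env
        self.max_steps = max_steps
        self.step_count = 0

    def reset(self) -> float:
        self.step_count = 0
        return self.env.reset()

    def step(self, action: float) -> StepResult:
        result = self.env.step(action)
        self.step_count += 1
        # Truncate only if the episode did not already end for a task reason
        if self.step_count >= self.max_steps and not result.terminated:
            result.truncated = True
        return result


def rollout(env, max_frames: int) -> tuple[int, float, bool]:
    """Run one episode; return (steps taken, return, whether it was truncated)."""
    env.reset()
    total_reward, steps = 0.0, 0
    for _ in range(max_frames):
        result = env.step(0.0)
        total_reward += result.reward
        steps += 1
        if result.terminated or result.truncated:
            return steps, total_reward, result.truncated
    return steps, total_reward, False
```

Consider `rollout(StepLimit(NeverFallsEnv(), max_steps=1000), max_frames=5000)`. It stops after exactly 1000 steps with the truncation flag set, even though the environment never fails. This is why 1000 is the ceiling of the step-count curves.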

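```python
import matplotlib.pyplot as plt

# `logs` is the defaultdict(list) filled during the training loop above.
plt.figure(figsize=(10, 10))

# Top left: average reward of each collected training batch
plt.subplot(2, 2, 1)
plt.plot(logs["reward"])
plt.title("training rewards (average)")

# Top right: longest trajectory observed in each training batch
plt.subplot(2, 2, 2)
plt.plot(logs["step_count"])
plt.title("Max step count (training)")

# Bottom left: cumulative reward of the periodic evaluation rollouts
plt.subplot(2, 2, 3)
plt.plot(logs["eval reward (sum)"])
plt.title("Return (test)")

# Bottom right: length of the evaluation rollouts
plt.subplot(2, 2, 4)
plt.plot(logs["eval step_count"])
plt.title("Max step count (test)")

plt.show()
```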
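You can also read the four panels numerically rather than visually by summarizing the same `logs` keys. This is a minimal sketch. `summarize_logs` is a hypothetical helper, and its default `step_cap=1000` assumes the truncation limit mentioned in the results paragraph.

```python
from __future__ import annotations


def summarize_logs(logs: dict[str, list[float]], step_cap: int = 1000) -> dict[str, object]:
    """Hypothetical helper: first, last and best value per curve, plus a step-cap check."""
    summary: dict[str, object] = {}
    for key in ("reward", "step_count", "eval reward (sum)", "eval step_count"):
        values = logs.get(key, [])  # .get does not insert keys into a defaultdict
        if values:
            summary[key] = {"init": values[0], "final": values[-1], "best": max(values)}
    # Step counts can never exceed the truncation limit, so reaching it means the
    # policy kept the pendulum up for a full-length episode.
    step_counts = list(logs.get("step_count", [])) + list(logs.get("eval step_count", []))
    summary["reached_step_cap"] = bool(step_counts) and max(step_counts) >= step_cap
    return summary
```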

Conclusion and next steps

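In this tutorial, we have learned:

How to create and customize an environment with torchrl;

How to write a model and a loss function;

How to set up a typical training loop.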

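If you want to experiment further with this tutorial, you can apply the following modifications:

From an efficiency perspective, we could run several simulations in parallel to speed up data collection. Check ParallelEnv for more information.

From a logging perspective, one could ask the environment for rendering and then add a torchrl.record.VideoRecorder transform to it, to get a visual rendering of the inverted pendulum in action. Check torchrl.record to learn more.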
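The idea behind parallel data collection can be sketched without TorchRL. Several independent, differently seeded simulations are stepped concurrently, and their outputs are stacked into one batch. Each collection round then yields `num_workers` times more frames.

The names below (`collect_rollout`, `collect_in_parallel`) are hypothetical, and the "simulation" just draws random rewards. Threads are used only to keep the sketch portable. ParallelEnv itself runs each environment in a separate worker process, which is what makes it effective for CPU-bound simulators. Check its documentation for the exact constructor arguments.

```python
from __future__ import annotations

import random
from concurrent.futures import ThreadPoolExecutor


def collect_rollout(seed: int, num_steps: int) -> list[float]:
    """Hypothetical worker: roll out one toy simulation and return its rewards."""
    rng = random.Random(seed)  # each worker owns an independently seeded RNG
    return [rng.random() for _ in range(num_steps)]


def collect_in_parallel(num_workers: int, num_steps: int, base_seed: int = 0) -> list[list[float]]:
    """Run num_workers toy simulations concurrently and stack them into one batch."""
    seeds = [base_seed + i for i in range(num_workers)]
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        # map preserves input order, so batch[i] always comes from worker i
        batch = list(pool.map(collect_rollout, seeds, [num_steps] * num_workers))
    return batch
```

For example, `collect_in_parallel(4, 250)` returns 4 rows of 250 steps, which is 1000 frames per collection round. Seeding each worker differently matters: identical seeds would replay identical trajectories and waste the extra workers.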

Total running time of the script: (3 minutes 7.796 seconds)